999LUCKY150 Online Lottery Site: The No. 1 Leading Site with the Highest Payout Rates in the Country, 2022

Welcome, everyone, to the leading and fastest-growing lottery site in the country for 2022. The 999lucky lottery betting site is one that many people are interested in and play on right now, because the site offers convenient service that makes placing bets easy for customers.

There are so many online lottery betting sites today that it is almost impossible to tell which ones meet proper standards, are stable and secure, actually pay out, and have no history of cheating customers. We therefore recommend 999lucky, a site that lottery fans trust and the fastest-growing online lottery betting site of 2022. Service is fast and responsive, and its automated deposit and withdrawal system is more modern than those of other sites.

Most importantly, our site offers the government lottery, Yi Ki lottery, Hanoi lottery, and underground lottery for all customers to enjoy betting on, and you can place bets directly through the 999lucky website entrance. We guarantee that our support team will look after every customer equally, as if each one were a VIP. Contact us with questions or sign up to bet on the lottery at 999LUCKY!

999LUCKY: an online lottery betting site used by hundreds of thousands of people, with 100% financial stability

Sign up to bet on the lottery with 999LUCKY150 today at no cost whatsoever. A new dimension for the lottery scene, the best of 2022.

999lucky150 is a leading, full-service online lottery site and the most widely accepted among lottery players in Thailand. It offers many online lotteries to choose from, selecting the best lotteries from around the world for you to play and enjoy to your heart's content. Interested? Sign up to bet on the lottery!

The lotteries on offer include the government lottery, Jab Yi Ki lottery, Thai stock lottery, foreign stock lottery, Singapore lottery, Lucky Heng lottery, Hanoi Morning, Hanoi Afternoon, Hanoi Digital, Malay Special, Malay Kelantan, Malay Digital, Malay Morning, Malay Afternoon, Malay 5G, Laos VIP, Laos Special, Laos 5D, Nikkei VIP, Nikkei Special, Nikkei Digital, Hang Seng VIP, Hang Seng Special, Hang Seng Digital, and more.

There are also many online casino games, such as Dragon Tiger, Pan Pae (coin spin), rock-paper-scissors, slots, roulette, and baccarat, as well as many sports games.

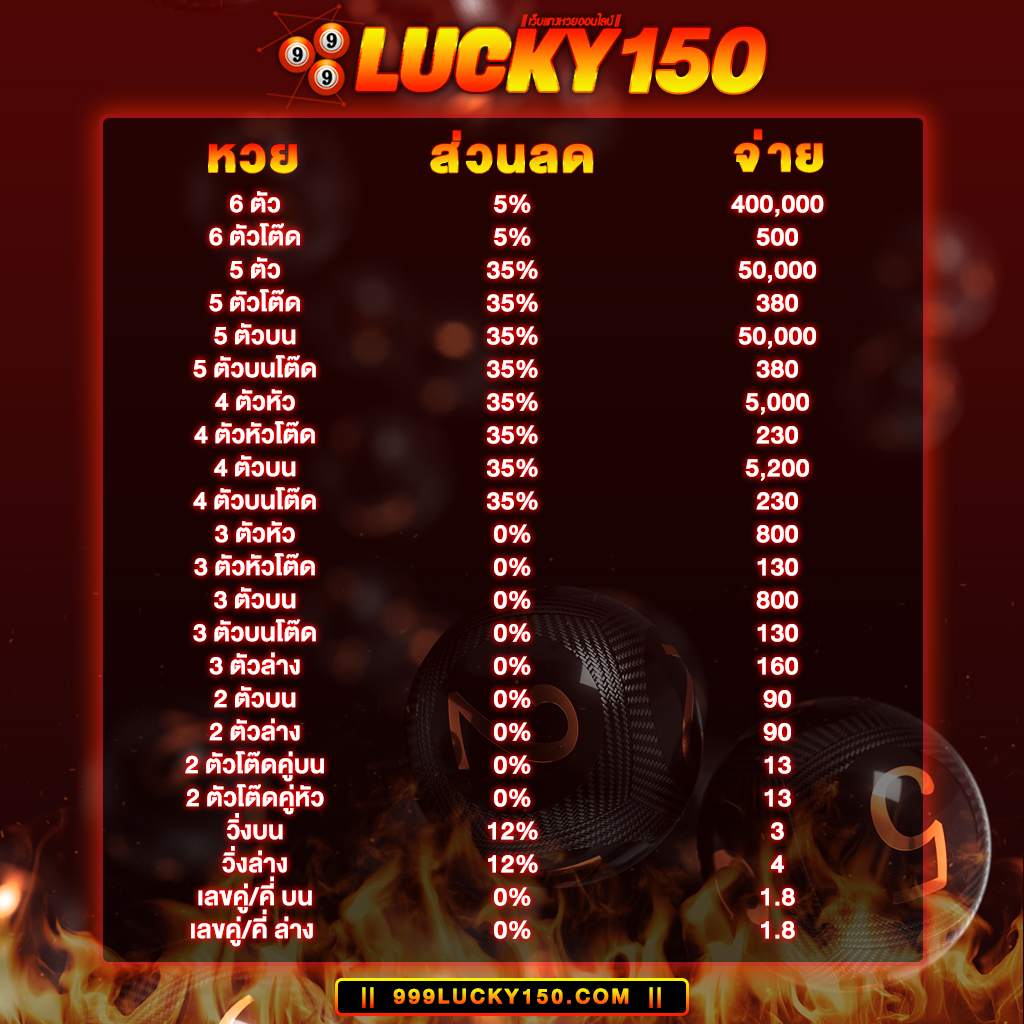

The strengths of 999LUCKY online lottery 2021: it is a leading service for placing lottery bets and buying lottery tickets online, with financial stability and 100% security. It also offers a payout rate of up to 800 baht per baht wagered, large discounts, and real winnings paid in full with no cheating.

You can bet on a full-service online lottery that pays real money, the best there is, only at 999LUCKY!

- Laos lottery

- Hanoi lottery

- Vietnam lottery

- Thai stock lottery

- Foreign stock lottery

- Jab Yi Ki lottery

- Government lottery

- Singapore stock lottery

- Malay lottery

- Ping Pong lottery

- Dow Jones stock lottery

- Lucky Heng lottery

Members can also check prize results and Laos lottery results for free directly on the 999LUCKY150 website.

- LaosThakhek.com

- LaosPakse.com

- LaosPhonsavanh.com

- Laossaibuli.com

- LaosXnuwng.com

- Laos4sekong.com

- Laospaksan.com

- LaosHuayXai.com

- LaosPhongsali.com

- LaosPhonhong.com

- Laos4salavan.com

- LaosGPamhihan.com

- LaosLNamtha.com

- LaosLPrabang.com

- LaosSumNuea.com

- LaosAttapeu.com

- laosMueangSai.com


The benefits of signing up for online lottery betting with 999LUCKY150 are as follows:

- Automated deposits and withdrawals, fast and responsive

- Deposits and withdrawals with no minimum

- Deposits and withdrawals available 24 hours a day

- Many good promotions to try for

- Sign up and receive a special bonus, free, immediately

- Online lottery betting with no minimum

- The highest prize payout rates

- Payout rate of up to 800 baht per baht wagered

- Support for a modern, full-featured lottery betting system

- Staff contact system: reach our team 24 hours a day

- Advanced security system with the tightest protection

- Runs fully on a Cloud Server system that meets the highest standards

- "All Time" backup of customer data, so you need not worry about your money disappearing

- Member referral system paying commissions of up to 5%

- Ping Pong lottery: members can draw the results themselves

Real promotions, really given out, by Thailand's leading online lottery site. It has to be 999LUCKY!

- Special bonuses, given away free!

- The biggest discounts on lottery bets

- AFF friend-referral system with commissions of up to 5%

- Buy lottery tickets online with unlimited payouts and no restricted numbers

- Real payouts, paid in full, paid big, no cheating

- Stable, secure finances you can trust 100%

- Automated deposits and withdrawals

- Many more promotions and privileges

- Lottery betting with no minimum

- Bets start from as little as 1 baht

- "All Time" backup of customer data, so you need not worry about your money disappearing

Please read this before deciding, if you do not want to fall victim to these lottery sites that cheat:

Hia Rung (เฮียรุ่ง): credit balances disappeared

Hia Mod (เฮียมด): does it cheat? The answer is yes, definitely, 100%

Jackpot666: withdrawal requests cannot be completed

Lottopoipet: shut down permanently

Sboibc888: access routes blocked

Huay999 (หวย999): finances not secure

Lottopoon: large numbers of member accounts banned

Deewowlotto: site goes down very often

Hoy111: servers not yet up to standard

Chadalotto: pays out half the amount

Chok77 (โชค77): rigs lottery results against members

Sign up free today for a chance at many special privileges from the site, along with promotions for new customers only!

Contact the 999LUCKY team 24 hours a day / Service operated by Hia Big @ Poipet

Stable, secure, real payouts, no cheating, and a fast deposit and withdrawal system, only here.

Laos Thakhek lottery | Malay Kelantan lottery | Nikkei stock lottery | Government lottery | Lucky Heng lottery